By Prof. Nathalie DEVILLIER, Ph.D. in International Law

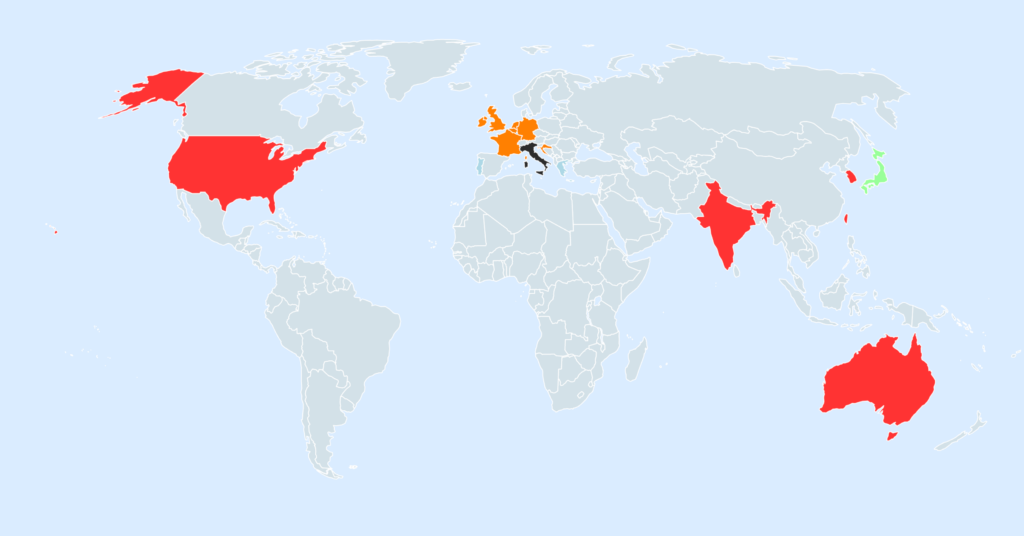

DeepSeek, an emerging Chinese player in generative artificial intelligence, is at the center of regulatory controversies in several countries. Recent decisions by data protection authorities in Italy, Japan, South Korea, and India highlight the growing tensions between technological innovation and compliance with national and international regulatory frameworks.

These measures demonstrate that regulatory authorities, citizens, and digital rights organizations are becoming increasingly uncompromising when it comes to fundamental rights and privacy. Even the slightest lapse in transparency or compliance can lead to severe restrictions, jeopardizing market access and causing lasting damage to the reputation of industry players.

Italy: A ban that sends a strong message

Italy has taken an unequivocal stance by prohibiting DeepSeek operators from processing data within its territory (Source). This ban, issued by the Italian data protection authority, is consistent with the authority’s earlier practice: in 2023 it ordered a temporary suspension of ChatGPT over data collection deemed non-compliant with the General Data Protection Regulation (GDPR).

In the case of DeepSeek, Italian authorities cite serious violations of the fundamental principles of the GDPR, particularly regarding transparency and user consent.

Japan: A response coordinated with other countries

In Japan, the Personal Information Protection Commission has issued an official warning regarding DeepSeek’s data collection practices. This warning comes as Japan seeks to strengthen its data protection standards to comply with the requirements of its trading partners, particularly the European Union. The main criticism leveled at DeepSeek concerns the lack of transparency in its data processing and the absence of clear mechanisms allowing users to exercise their privacy rights. Japanese authorities emphasize the need for greater transparency and improved access to information regarding how data is used, stored, and shared.

Last Thursday, Japanese Chief Cabinet Secretary Yoshimasa Hayashi stated that the Japanese government “will handle the matter appropriately, while cooperating with data protection authorities in other countries” (Source).

South Korea: Investigation into Data Management

In South Korea, the Personal Information Protection Commission has announced its intention to request detailed information regarding DeepSeek’s handling of personal data. The aim is to assess whether the company complies with the fundamental principles of the Personal Information Protection Act (PIPA), which is one of the most rigorous data protection frameworks in Asia. This initiative is part of a broader effort to ensure that foreign technology companies operating in South Korea adhere to high standards of data protection. South Korea has recently strengthened its legislative framework in response to growing concerns regarding cybersecurity and the misuse of personal data through offensive tactics (Source).

India: An Attempt at Compromise?

India, for its part, is taking a different approach by seeking to strike a balance between data protection and technological innovation. The Indian government proposes to host DeepSeek locally in order to alleviate concerns regarding data sovereignty (Source). This solution would aim to ensure that Indian users’ information remains under the control of local authorities, thereby reducing the risks associated with its exploitation by foreign entities. Local data storage mandates of this kind are already in place in China and Russia.

The list of investigations opened against DeepSeek around the world doesn’t end there: Australia, Belgium, Croatia, France, Ireland, Luxembourg, Taiwan…


All of the proceedings can be viewed here on note2map, published by Australian lawyer and new technology specialist Raymond SUN.

The issue goes beyond data protection, as cybersecurity researchers have discovered hidden code in the web version of DeepSeek that links it to the IT systems of China Mobile, a Chinese telecommunications operator with close ties to the Chinese military (Source).

To date, only NASA has blocked DeepSeek for "security and privacy reasons" (Source).

A global model in need of reinvention

Taken together, these decisions highlight the growing regulatory compliance challenges facing artificial intelligence companies. The coexistence of diverse and sometimes conflicting national legal frameworks poses a barrier for companies seeking to operate on a global scale.

The same fragmentation applies to the regulation of AI itself. The European Regulation on Artificial Intelligence, Regulation (EU) 2024/1689, introduced a classification of AI systems based on their risk level, placing the European Union at the forefront of AI regulation. In contrast, China seeks to regulate the use of artificial intelligence from a security and personal data control perspective, and India supports a “pro-innovation” approach to AI regulation that still takes anticipated risks into account.

The DeepSeek case thus illustrates the impact of fragmented national laws on companies operating in different jurisdictions. Some companies are attempting to address these challenges by adopting region-specific compliance policies. The proliferation of regulations and oversight requirements is forcing industry players to rethink their data collection and processing models.

The current trend shows that companies that fail to adapt to growing data protection requirements risk having their access to certain markets restricted or even completely barred.

DeepSeek, which has faced a ban in Italy, an official warning in Japan, a request for information in South Korea, and a proposal for local hosting in India, is a textbook example of the tensions between technological innovation and regulatory compliance.

The issue of user trust is also crucial. The ability of artificial intelligence companies to ensure data protection and respect fundamental rights determines their social acceptability and long-term success. Recent decisions against DeepSeek illustrate the growing importance attached to these issues by regulatory authorities, as well as by citizens and digital rights organizations.

Changes to these regulations and their implementation in the coming years will have a decisive impact on the development of generative artificial intelligence. It is likely that companies in the sector will not only have to invest more in bringing their practices into compliance, but also anticipate the harmonization of rules on an international scale, which appears to be necessary to avoid excessive market fragmentation. However, differences in approach among major economic powers suggest that this harmonization will remain a significant challenge for years to come.
